I know it’s been just one week since the official 12.11 release, but I’ve had reason to make some changes already! The extreme weather gave an excellent testing opportunity, and brought into focus some suspicions I was already having about the learning routine. Then, they called off school (too cold for Atlanta kids, I guess: 6 degrees F (-14.4 C) this morning), so I spent most of the day carefully researching, testing, and implementing some changes.

First, I’ve been having a hard time wrapping my head around some of the statistical/probabilistic issues with the learning routine, especially the “slope” factor. This controls whether the temperature wants to “hug” normal more closely, or feel more free to “wander”. It actually doesn’t have much effect in normal weather, but (as I really found out here) it can have a large effect when there are large temperature excursions from normal.

I had it mostly (80:20) paying attention to error versus departure of forecast from normal, as opposed to paying attention to error versus actual departure from normal. I felt this made sense, as the correction is of course acting on the forecast (not the actuals - now that would be something!!!). I was aware of a possible problem due to multiple forecasts in one day (you may have seen the little slanted trains of dots on one of the graphs), but artificial examples I was using to study it showed only a small difference between the two approaches. Real data (some my own, but most of it from some of you … thanks!) showed the difference was actually significant in many cases. I finally realized that it’s not just multiple forecasts in a day, but the whole idea of basing the corrections off the departures from normal for forecasts that have “random” error, as well as systematic error, which causes the problem. To complicate matters some, that’s not all bad, because the non-random component still has some value. It’s ironic, but random error was systematically producing a bias!
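To illustrate how purely random forecast error can show up as an apparent bias, here is a minimal simulation sketch. It is not WXSIM's learning routine: the function names, the Gaussian assumptions, and the standard deviations (8 degrees for actual departures, 4 degrees for random forecast error) are all made up for illustration. The forecasts contain no systematic error at all, yet a regression of error against forecast departure still finds a positive slope, while the same regression against actual departure finds essentially none.

```python
import random


def slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def simulate(n=20000, sd_actual=8.0, sd_noise=4.0, seed=1):
    """Hypothetical setup: forecast departure = actual departure + random noise."""
    rng = random.Random(seed)
    actual_dep = [rng.gauss(0.0, sd_actual) for _ in range(n)]
    noise = [rng.gauss(0.0, sd_noise) for _ in range(n)]
    forecast_dep = [a + e for a, e in zip(actual_dep, noise)]
    # Forecast error is purely random here: no systematic component.
    error = [f - a for f, a in zip(forecast_dep, actual_dep)]
    return slope(forecast_dep, error), slope(actual_dep, error)


slope_vs_forecast, slope_vs_actual = simulate()
print("error vs forecast departure:", round(slope_vs_forecast, 3))
print("error vs actual departure:  ", round(slope_vs_actual, 3))
```

Under these assumptions the first slope should come out near var(noise) / (var(actual) + var(noise)) = 16 / 80 = 0.2, so a correction fitted this way would pull large forecast departures back towards normal even though nothing systematic is wrong with them.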

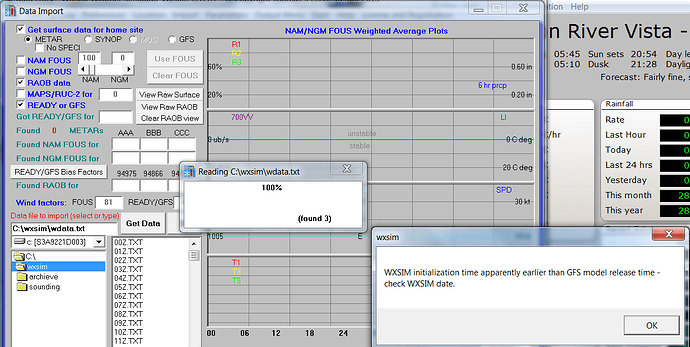

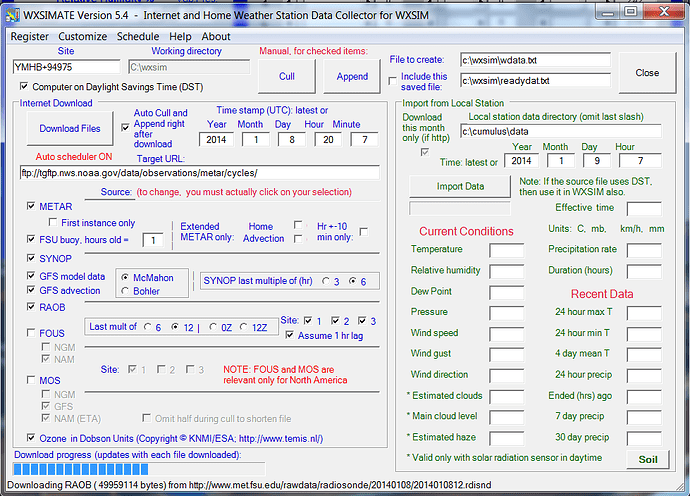

Basing this correction factor off actual departures from normal avoids this bias, but also loses some valuable information. I couldn’t seem to decide which approach is better, and was thinking of splitting the difference (50:50), but fortunately I had extreme test data (having saved the wdata.txt files from some runs over the last few days), so I was able to arrive empirically at a weighting, tentatively 60% actual (though with a correction applied to make it relevant for a function of forecast departure from normal) and 40% forecast. My “retrocasts” are now excellent, generally within a degree or two of the 6 F low and 26 F high we had today.
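The 60:40 weighting can be pictured as a simple weighted average of the two slope estimates. This is only a sketch: the function name and the example slopes are hypothetical, and the extra correction mentioned above (to make the actual-based figure relevant for a function of forecast departure) is not described in this post, so it is left out.

```python
def blended_slope(slope_vs_actual, slope_vs_forecast, weight_actual=0.6):
    """Weighted average of two slope estimates (hypothetical helper).

    weight_actual is the share given to the actual-departure estimate;
    the remainder goes to the forecast-departure estimate.
    """
    if not 0.0 <= weight_actual <= 1.0:
        raise ValueError("weight_actual must be between 0 and 1")
    return weight_actual * slope_vs_actual + (1.0 - weight_actual) * slope_vs_forecast


# Made-up slopes, just to show the arithmetic of a 60:40 split.
print(blended_slope(slope_vs_actual=0.0, slope_vs_forecast=0.2))
```

Setting `weight_actual=0.2` would correspond to the earlier 80:20 weighting in favor of forecast departure, and `0.5` to splitting the difference.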

I also made a change in WXSIM, to improve a protection against bad extrapolations. I came up with a sort of “bell” shaped function which smoothly weakens the correction factors out in the range where data is sparse, so that in very “unfamiliar territory”, it leans back towards what it would be doing without the correction factors (but not completely).
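For anyone curious what such a “bell” shaped weakening might look like, here is one possible form, a Gaussian with a floor. The actual function in WXSIM is not given here, so the names, the Gaussian shape, and the `width` and `floor` values are assumptions for illustration only.

```python
import math


def damping(x, center=0.0, width=15.0, floor=0.25):
    """Bell-shaped weight (hypothetical form).

    Equals 1 at `center` (assumed to be where the learning data is dense) and
    falls smoothly towards `floor` far away, so the correction is weakened
    in unfamiliar territory but never removed completely.
    """
    z = (x - center) / width
    return floor + (1.0 - floor) * math.exp(-0.5 * z * z)


def apply_correction(raw_forecast, correction, x, **kwargs):
    """Add a correction scaled by the damping weight at predictor value x."""
    return raw_forecast + damping(x, **kwargs) * correction


# Made-up numbers: a 4 degree correction at increasing distance from the data.
for x in (0.0, 15.0, 30.0, 60.0):
    print(x, round(apply_correction(20.0, 4.0, x), 2))
```

A nonzero `floor` is what keeps the result from falling all the way back to the uncorrected forecast; with `floor=0.0` the correction would vanish entirely far from the data.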

I know that’s a bunch of technical stuff, but I’ve been thinking about it all day, and thought I’d share!

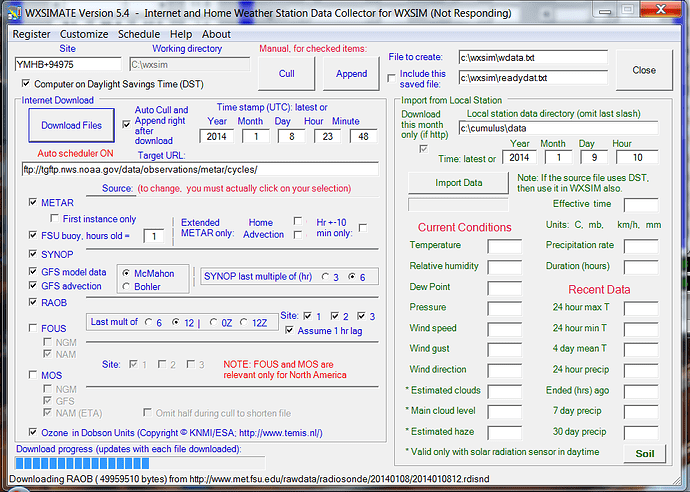

OK, here are the new versions (consider them “beta”, as there could be more tweaking). Just get these two files and use them to replace the ones you have:

www.wxsim.com/wxsim.exe

www.wxsim.com/wret.exe

Hopefully, they are bug free (in fact, I did a workaround in WXSIM for an error 62 which one or two people reported in 12.11). In any case, it seems to be a real improvement over 12.11 and over anything else since I started with the learning routine (and autolearn).

Let me know how it goes!

Tom